
Control & Behavior Learning

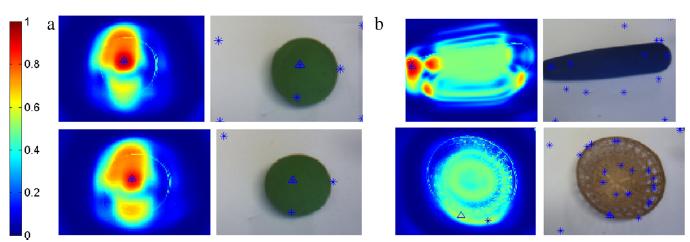

Learning Grasping points

One of the basic skills for autonomous robotic grasping is selecting an appropriate grasping point on an object. Several recent works have shown that grasping points can be learned from different types of features, extracted either from a single image or from more complex 3D reconstructions. In the context of learning through experience this is very convenient, since it does not require a full reconstruction of the object and it implicitly incorporates kinematic constraints such as the hand morphology. However, these learning strategies usually require a large set of labeled examples, which can be expensive to obtain. In this paper, we address the problem of actively learning good grasping points in order to reduce the number of examples the robot needs.

The proposed algorithm computes the probability of successfully grasping an object at a given location represented by a feature vector. By autonomously exploring different feature values on different objects, the system learns where to grasp each of them. The algorithm combines beta-binomial distributions with a non-parametric kernel approach to provide the full distribution of the grasp success probability. This information enables an active exploration that efficiently learns good grasping points, even across different objects. We tested the algorithm on a real humanoid robot that acquired the examples by experimenting directly on the objects; the approach therefore copes better with complex (anthropomorphic) hand-object interactions whose outcomes are difficult to model or predict. The results show smooth generalization even with very little data, as is often the case in learning through experience.

Active learning of visual descriptors for grasping using non-parametric smoothed beta distributions, Luis Montesano and Manuel Lopes. Robotics and Autonomous Systems, accepted; available online 26 August 2011. doi:10.1016/j.robot.2011.07.013.

Learning grasping affordances from local visual descriptors, Luis Montesano and Manuel Lopes. IEEE International Conference on Development and Learning (ICDL), Shanghai, China, 2009.
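To make the grasp-probability model described above more concrete, here is a minimal sketch under our own assumptions: each past grasp attempt at feature vector x_i with outcome s_i (1 = success, 0 = failure) adds kernel-weighted pseudo-counts to a Beta distribution at a query location, so every candidate gets a full Beta posterior over its success probability instead of a point estimate. The Gaussian kernel, the bandwidth, the uniform Beta(1, 1) prior, and the use of Thompson sampling to choose the next grasp are illustrative choices; the papers' exact smoothing and exploration criterion may differ. All function names are hypothetical.

```python
import math
import random

def gaussian_kernel(x, y, bandwidth=0.5):
    """Squared-exponential similarity between two feature vectors."""
    d2 = sum((a - b) ** 2 for a, b in zip(x, y))
    return math.exp(-d2 / (2.0 * bandwidth ** 2))

def smoothed_beta(query, features, outcomes, a0=1.0, b0=1.0, bandwidth=0.5):
    """Beta(alpha, beta) over the grasp success probability at `query`.

    Each past trial adds kernel-weighted pseudo-counts: its weight goes to
    alpha if the grasp succeeded (outcome 1) and to beta if it failed (0).
    """
    alpha, beta = a0, b0
    for x, success in zip(features, outcomes):
        w = gaussian_kernel(query, x, bandwidth)
        alpha += w * success
        beta += w * (1 - success)
    return alpha, beta

def beta_mean_var(alpha, beta):
    """Mean and variance of a Beta(alpha, beta) distribution."""
    n = alpha + beta
    return alpha / n, alpha * beta / (n * n * (n + 1))

def choose_next_grasp(candidates, features, outcomes, rng):
    """Thompson sampling: draw p ~ Beta(alpha, beta) per candidate, keep the best draw."""
    best, best_p = None, -1.0
    for c in candidates:
        alpha, beta = smoothed_beta(c, features, outcomes)
        p = rng.betavariate(alpha, beta)
        if p > best_p:
            best, best_p = c, p
    return best

# Usage: 1-D descriptors, two successes near 0.0 and one failure at 1.0.
features = [(0.0,), (0.1,), (1.0,)]
outcomes = [1, 1, 0]
print(beta_mean_var(*smoothed_beta((0.05,), features, outcomes)))
print(beta_mean_var(*smoothed_beta((1.0,), features, outcomes)))
print(choose_next_grasp([(0.0,), (0.5,), (1.0,)], features, outcomes, random.Random(0)))
```

Sampling from each posterior favors locations that are either likely to succeed or still poorly explored (wide posterior), which is one simple way to turn the full distribution into an active exploration strategy.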

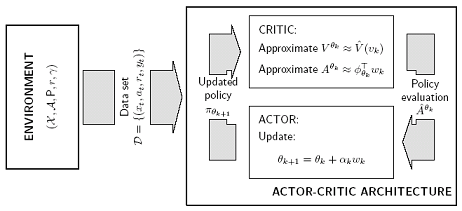

Fitted Natural Actor-Critic

We propose a new algorithm, fitted natural actor-critic (FNAC), that allows for general function approximation and data reuse. It combines the natural actor-critic architecture with a variant of fitted value iteration that uses importance sampling. The resulting method combines the appealing features of both approaches while overcoming their main weaknesses: the gradient-based actor avoids the difficulties that regression methods face in policy optimization over continuous action spaces, while the regression-based critic uses data efficiently and avoids the convergence problems that TD-based critics often exhibit. We establish the convergence of the algorithm and illustrate its application on a simple problem with continuous states and continuous actions.

The algorithm has been applied in different settings:

- Learning to grasp by coordinating all degrees of freedom of the hand (video).
- Improving the walking gait by tuning the parameters of the cycle generators that control the walking pattern. See the video of the learning progression and of the robot walking around with the optimized parameters (video).
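As an illustration of the actor/critic split in FNAC described above, here is a heavily simplified sketch of a natural actor-critic update with a regression-based critic and importance-sampled data reuse. The assumptions are ours, not the paper's: a one-step task (so the fitted value iteration over next states is omitted), a hypothetical reward r(s, a) = -(a - 2s)^2, a Gaussian policy whose mean is linear in the features [s, 1], and a fixed exploration noise `SIGMA`. The critic fits rewards by importance-weighted least squares on the compatible features grad log pi(a|s) plus a constant baseline; with compatible features the fitted weights estimate the natural policy gradient, which the actor then follows.

```python
import math
import random

SIGMA = 0.3  # fixed exploration noise of the Gaussian policy (assumption)

def features(s):
    return [s, 1.0]

def policy_mean(theta, s):
    return sum(t * f for t, f in zip(theta, features(s)))

def log_density(theta, s, a):
    """log pi(a | s) for the Gaussian policy N(theta . phi(s), SIGMA^2)."""
    z = (a - policy_mean(theta, s)) / SIGMA
    return -0.5 * z * z - math.log(SIGMA * math.sqrt(2.0 * math.pi))

def compatible_features(theta, s, a):
    """grad_theta log pi(a | s) for the Gaussian policy above."""
    m = policy_mean(theta, s)
    return [(a - m) / SIGMA ** 2 * f for f in features(s)]

def solve(A, b):
    """Gaussian elimination with partial pivoting for a small dense system."""
    n = len(b)
    M = [row[:] + [b[i]] for i, row in enumerate(A)]
    for col in range(n):
        piv = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[piv] = M[piv], M[col]
        for r in range(col + 1, n):
            f = M[r][col] / M[col][col]
            for c in range(col, n + 1):
                M[r][c] -= f * M[col][c]
    x = [0.0] * n
    for r in reversed(range(n)):
        x[r] = (M[r][n] - sum(M[r][c] * x[c] for c in range(r + 1, n))) / M[r][r]
    return x

def natural_gradient(theta, batch, ridge=1e-6):
    """Critic: importance-weighted least squares of reward on [psi(s, a), 1].

    `batch` holds (s, a, r, log-prob of a under the policy that generated it).
    The weights on psi estimate the natural gradient; the last one is a baseline.
    """
    d = len(theta) + 1
    A = [[ridge if i == j else 0.0 for j in range(d)] for i in range(d)]
    b = [0.0] * d
    for s, a, r, logp_behavior in batch:
        w_is = math.exp(log_density(theta, s, a) - logp_behavior)
        x = compatible_features(theta, s, a) + [1.0]
        for i in range(d):
            b[i] += w_is * x[i] * r
            for j in range(d):
                A[i][j] += w_is * x[i] * x[j]
    return solve(A, b)[:-1]

def reward(s, a):
    """Hypothetical task: the best action is a = 2 s."""
    return -(a - 2.0 * s) ** 2

def train(iterations=100, batch_size=200, step=1.0, seed=0):
    rng = random.Random(seed)
    theta = [0.0, 0.0]
    replay = []
    for _ in range(iterations):
        for _ in range(batch_size):
            s = rng.uniform(-1.0, 1.0)
            a = rng.gauss(policy_mean(theta, s), SIGMA)
            replay.append((s, a, reward(s, a), log_density(theta, s, a)))
        replay = replay[-2 * batch_size:]  # reuse the previous batch via importance weights
        g = natural_gradient(theta, replay)
        theta = [t + step * gi for t, gi in zip(theta, g)]  # actor: natural-gradient ascent
    return theta

print(train())  # theta should move toward [2.0, 0.0]
```

Keeping the previous batch and reweighting it by pi_new/pi_old is what lets the regression critic reuse data collected under an older policy.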

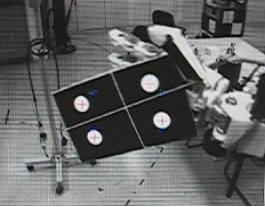

Task Sequencing in Visual Servoing

This experiment shows Baltazar performing a sequence of tasks involving 6 degrees of freedom (DOF).

Videos: internal view (video) and external view (video). The same experiment with an industrial robot: internal view (video) and external view (video).

Relevant publications:

Mansard et al. IROS06 (pdf)

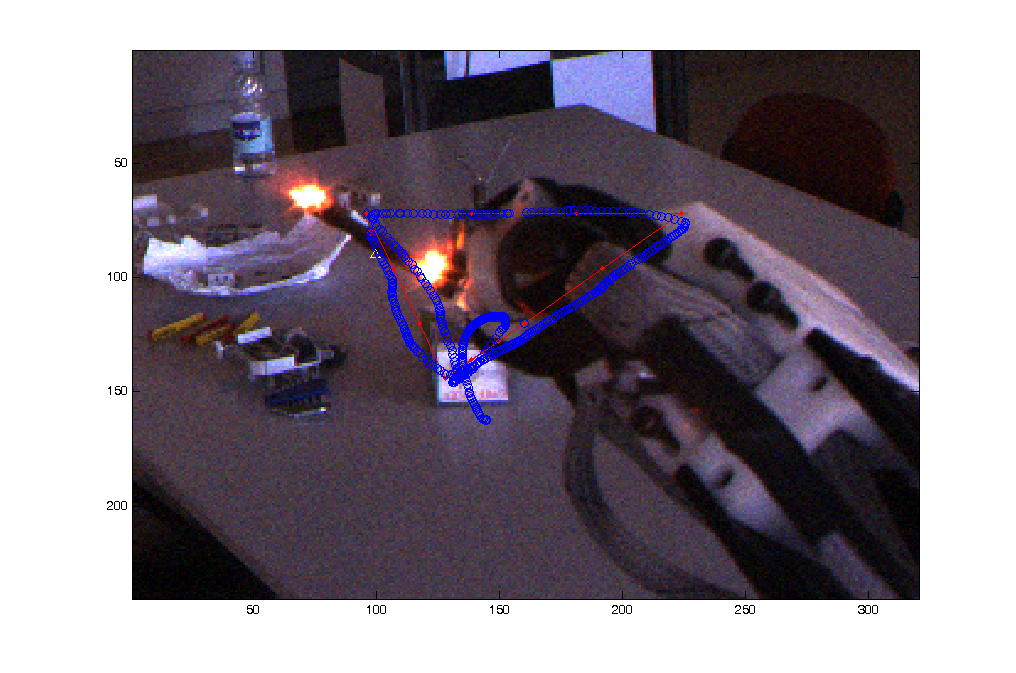

Visual Servoing

This experiment shows Baltazar moving its arm randomly in order to estimate the visual Jacobian (video). With this Jacobian, Baltazar is able to perform 2D visual servoing. This (video) shows the extracted features (the positions of two points and an orientation) and the desired trajectories (a hexagon and a triangle), and this (video) shows the same movement seen from the other eye.

Relevant publications: Mansard et al. IROS06 (pdf)
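The procedure described above (random arm motions to estimate the image Jacobian, then servoing on image features) can be sketched as follows. Everything here is a hypothetical stand-in: `observe` fakes the camera with the image position of the hand of a planar 2-link arm, only one image point (2 features) is servoed with 2 joints so the Jacobian is square and can be inverted directly, and the Jacobian is re-estimated by least squares from small random probes at every step. The control law is the classic image-based one, dq = -gain * J^-1 (s - s*).

```python
import math
import random

def observe(q):
    """Hypothetical camera: image (x, y) of the hand of a planar 2-link arm."""
    x = math.cos(q[0]) + 0.8 * math.cos(q[0] + q[1])
    y = math.sin(q[0]) + 0.8 * math.sin(q[0] + q[1])
    return [x, y]

def solve2(A, b):
    """Solve the 2x2 system A x = b with Cramer's rule."""
    det = A[0][0] * A[1][1] - A[0][1] * A[1][0]
    return [(b[0] * A[1][1] - A[0][1] * b[1]) / det,
            (A[0][0] * b[1] - b[0] * A[1][0]) / det]

def estimate_jacobian(q, rng, n_moves=6, scale=0.02):
    """Least-squares fit of ds ~= J dq from small random joint motions around q."""
    s0 = observe(q)
    G = [[0.0, 0.0], [0.0, 0.0]]  # sum of dq dq^T
    H = [[0.0, 0.0], [0.0, 0.0]]  # H[k][j] = sum of ds_k * dq_j
    for _ in range(n_moves):
        dq = [rng.uniform(-scale, scale), rng.uniform(-scale, scale)]
        s1 = observe([q[0] + dq[0], q[1] + dq[1]])
        ds = [s1[0] - s0[0], s1[1] - s0[1]]
        for i in range(2):
            for j in range(2):
                G[i][j] += dq[i] * dq[j]
                H[i][j] += ds[i] * dq[j]
    # Row k of J solves the normal equations G J_k = H_k (G is symmetric).
    return [solve2(G, H[k]) for k in range(2)]

def servo(q, target, gain=0.5, steps=60, seed=1):
    """Image-based visual servoing: dq = -gain * J^-1 (s - s*)."""
    rng = random.Random(seed)
    for _ in range(steps):
        s = observe(q)
        err = [s[0] - target[0], s[1] - target[1]]
        J = estimate_jacobian(q, rng)
        step = solve2(J, err)
        q = [q[0] - gain * step[0], q[1] - gain * step[1]]
    return q

target = observe([0.6, 0.9])  # desired image position of the hand
q_final = servo([0.3, 1.2], target)
print(math.dist(observe(q_final), target))  # residual image error
```

With more image features than joints (as with two points plus an orientation), J becomes rectangular and the inverse would be replaced by a pseudo-inverse.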

Object Grasping

This experiment demonstrates object grasping (video). The algorithm has two steps. The first is open-loop: a head-arm map moves the hand close to the object (the initial position). The second is a new algorithm that estimates the image Jacobian online and performs the final movement with visual servoing. The hand is then commanded to close and, thanks to its mechanical compliance, the fingers adapt to the shape of the object.
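The closed-loop second step described above can be illustrated with a Broyden-style online Jacobian update. This is a standard technique swapped in for illustration, since the page does not detail its own estimator. Assumptions: the same kind of hypothetical planar-arm `observe` camera model, a rough initial Jacobian `J0` standing in for whatever the open-loop head-arm stage provides, and a fixed gain. After each motion, the Jacobian receives a rank-one correction so that it reproduces the image displacement just observed.

```python
import math

def observe(q):
    """Hypothetical camera: image (x, y) of the hand of a planar 2-link arm."""
    x = math.cos(q[0]) + 0.8 * math.cos(q[0] + q[1])
    y = math.sin(q[0]) + 0.8 * math.sin(q[0] + q[1])
    return [x, y]

def solve2(A, b):
    """Solve the 2x2 system A x = b with Cramer's rule."""
    det = A[0][0] * A[1][1] - A[0][1] * A[1][0]
    return [(b[0] * A[1][1] - A[0][1] * b[1]) / det,
            (A[0][0] * b[1] - b[0] * A[1][0]) / det]

def broyden_servo(q, target, J, gain=0.5, steps=80):
    """Closed-loop servoing that refines the image Jacobian J online."""
    s = observe(q)
    for _ in range(steps):
        err = [s[0] - target[0], s[1] - target[1]]
        step = solve2(J, err)
        dq = [-gain * step[0], -gain * step[1]]
        q = [q[0] + dq[0], q[1] + dq[1]]
        s_new = observe(q)
        ds = [s_new[0] - s[0], s_new[1] - s[1]]
        norm2 = dq[0] ** 2 + dq[1] ** 2
        if norm2 > 1e-12:
            # Broyden update: J <- J + (ds - J dq) dq^T / (dq^T dq)
            resid = [ds[k] - (J[k][0] * dq[0] + J[k][1] * dq[1]) for k in range(2)]
            J = [[J[k][j] + resid[k] * dq[j] / norm2 for j in range(2)] for k in range(2)]
        s = s_new
    return q, J

J0 = [[-1.0, -0.8], [1.0, 0.0]]  # rough initial guess (e.g. from the open-loop stage)
target = observe([0.6, 0.9])      # image position of the grasp point
q_final, J_final = broyden_servo([0.3, 1.2], target, J0)
print(math.dist(observe(q_final), target))  # residual image error
```

Unlike the random-probe estimate, this update needs no extra exploratory motions: the servoing movements themselves provide the data used to correct the Jacobian.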

Relevant publications:
