Flowers AI & CogSci Lab

Artificial intelligence, Cognitive Sciences, Education and Scientific Discovery

icdl-epirob

Meet us @ ICDL-Epirob 2013

Five members of the Flowers Team will participate in the Third Joint IEEE International Conference on Development and Learning and on Epigenetic Robotics. The conference takes place in Osaka, Japan, August 18-22.

There you can meet Fabien Benureau, Jonathan Grizou, Olivier Mangin, Clément Moulin-Frier, and Mai Nguyen. They will be happy to discuss the team's latest research and future projects.

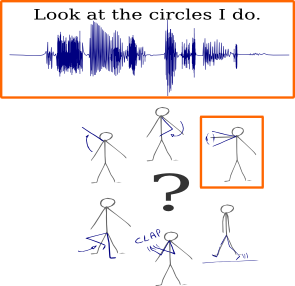

ICDL-EpiRob2013: Can robots discover spoken words and their connection to human gestures?

Next week, we will present our paper Learning Multimodal Semantic Components from Subsymbolic Perception Using NMF at the third joint ICDL-EpiRob conference in Osaka, Japan. In that article we demonstrate that it is possible to learn semantic concepts, each associated with both a word in spoken utterances and a gesture, by looking only at correlations between subsymbolic representations of the two modalities.
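To give a feel for the idea, here is a minimal sketch of NMF applied to two stacked modalities. It is not the code from the paper: the synthetic "sound" and "gesture" features, the dimensions, and the helper names `nmf` and `infer_activations` are assumptions made for illustration, and the factorization uses plain multiplicative updates on the Frobenius error.

```python
import numpy as np

def nmf(V, k, n_iter=200, seed=0, eps=1e-9):
    """Factorize a non-negative matrix V (features x samples) as W @ H
    using multiplicative updates on the Frobenius reconstruction error."""
    rng = np.random.default_rng(seed)
    W = rng.random((V.shape[0], k)) + eps
    H = rng.random((k, V.shape[1])) + eps
    for _ in range(n_iter):
        H *= (W.T @ V) / (W.T @ W @ H + eps)
        W *= (V @ H.T) / (W @ H @ H.T + eps)
    return W, H

def infer_activations(V_part, W_part, n_iter=200, seed=0, eps=1e-9):
    """Estimate activations H for data from one modality only,
    keeping that modality's part of the dictionary fixed."""
    rng = np.random.default_rng(seed)
    H = rng.random((W_part.shape[1], V_part.shape[1])) + eps
    for _ in range(n_iter):
        H *= (W_part.T @ V_part) / (W_part.T @ W_part @ H + eps)
    return H

# Synthetic data (an assumption): each sample expresses one of three
# concepts, seen both as 8 "sound" features and 5 "gesture" features.
rng = np.random.default_rng(42)
n_concepts, n_samples = 3, 60
sound_proto = rng.random((8, n_concepts))
gesture_proto = rng.random((5, n_concepts))
labels = rng.integers(0, n_concepts, size=n_samples)
V_sound = sound_proto[:, labels] + 0.01 * rng.random((8, n_samples))
V_gesture = gesture_proto[:, labels] + 0.01 * rng.random((5, n_samples))

# Learn a shared dictionary from the stacked modalities.
V = np.vstack([V_sound, V_gesture])
W, H = nmf(V, k=n_concepts)
W_sound, W_gesture = W[:8], W[8:]

# Cross-modal use: hear only the sound, predict the gesture features.
H_from_sound = infer_activations(V_sound, W_sound)
gesture_pred = W_gesture @ H_from_sound
```

Because each dictionary column spans both modalities, activations estimated from one modality can be used to reconstruct the other, which is one way to read "looking only at correlations" between the two.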

More details about the work can be found on that page.

Mangin O., Oudeyer P.Y., Learning semantic components from sub-symbolic multi-modal perception. To appear in the third Joint IEEE International Conference on Development and Learning and on Epigenetic Robotics (ICDL-EpiRob 2013), Osaka (Japan). [bibtex] [poster] [code] [details]

ICDL-Epirob 2013: Learning how to learn

In a few weeks in Osaka, Japan, we will present our latest work on ways to enable robots to autonomously choose how they learn, in an article titled Autonomous Reuse of Motor Exploration Trajectories.

We decided to explore the idea of enabling a robot to modify its own learning method based on its previous experience. Humans do the same: when studying, for instance when learning a piece of knowledge by heart, they explore different strategies: reading multiple times, rewriting, enunciating, visualizing, and repeating the learning sessions, or learning only once, the night before the test. Each individual eventually chooses a preferred strategy and tweaks its specifics as it is reused, often based on its perceived effectiveness. Humans learn how to learn.

In our work, we focused on how a robot could improve the way it explores a new, unknown task. The exploration strategy is an important factor in learning effectiveness; work on intrinsic motivation by our team demonstrated that. Autonomous robots, while potentially subjected to very diverse situations, retain a constant morphology: their kinematics and dynamics remain stable, and their motor space, the set of possible motor commands, stays the same. The hypothesis we made was that some exploration strategies are a priori more effective for a given robot, and that those strategies can be uncovered by analyzing past learning experience, that is, past exploration trajectories. Using those exploration strategies would lead to an increase in learning performance compared to random ones.

Our experiment confirmed that. We identified, through autonomous empirical measurement, the motor commands of a first task that belonged to areas where learning had been the most effective, and then reused them on a similar but different task, where the robot didn’t have access to the learning experience of the first task. The early learning performance increased significantly. Our method only requires that the motor space stay the same; the sensory space can be arbitrarily different (which makes it possible to reuse an exploration strategy to learn in another modality). It also makes no assumptions about the learning algorithms used, which can even differ between tasks.
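As a rough illustration of the reuse step, the sketch below picks past motor commands from the regions of the motor space where learning was most effective, then uses them to seed exploration on a new task. It is not the released experiment code: the grid discretization, the synthetic effectiveness scores, and the function name `select_reusable_commands` are all assumptions made for this example.

```python
import numpy as np

def select_reusable_commands(motor_commands, effectiveness, n_bins=5, top_cells=3):
    """Return the past motor commands that fall in the grid cells with
    the highest mean effectiveness. Commands are assumed to be
    normalized to the unit hypercube [0, 1]^d."""
    cells = np.minimum((motor_commands * n_bins).astype(int), n_bins - 1)
    keys = [tuple(c) for c in cells]
    sums, counts = {}, {}
    for key, score in zip(keys, effectiveness):
        sums[key] = sums.get(key, 0.0) + score
        counts[key] = counts.get(key, 0) + 1
    # Rank visited cells by their average effectiveness.
    ranked = sorted(sums, key=lambda c: sums[c] / counts[c], reverse=True)
    best = set(ranked[:top_cells])
    mask = np.array([key in best for key in keys])
    return motor_commands[mask]

# First task: a past exploration trajectory with a per-command score.
# The scores are synthetic (an assumption): learning is pretended to be
# effective only where the first motor dimension is above 0.8.
rng = np.random.default_rng(0)
past_commands = rng.random((500, 2))
past_scores = (past_commands[:, 0] > 0.8).astype(float) + 0.05 * rng.random(500)

reused = select_reusable_commands(past_commands, past_scores)

# Second task: start exploring with commands drawn from the reused set
# instead of uniformly random ones. Only the motor space is shared.
seed_commands = reused[rng.choice(len(reused), size=10, replace=False)]
```

Nothing here looks at sensory data from the second task, which matches the requirement that only the motor space stay the same between tasks.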

The article is available here.

We released the code used to run the experiments, so that anyone can reproduce and analyze them. You can access it here.

Reference: Fabien Benureau, Pierre-Yves Oudeyer, “Autonomous Reuse of Motor Exploration Trajectories”, in the proceedings of ICDL-Epirob 2013, Osaka, Japan.

ICDL-Epirob 2013: Robot Learning Simultaneously a Task and How to Interpret Human Instructions

Can a robot learn a new task if the task is unknown and the user is providing unknown instructions?

We explored this question in our paper: Robot Learning Simultaneously a Task and How to Interpret Human Instructions. To appear in Joint IEEE International Conference on Development and Learning and on Epigenetic Robotics (ICDL-EpiRob), Osaka, Japan (2013).

In this paper we present an algorithm to bootstrap shared understanding in a human-robot interaction scenario where the user teaches a robot a new task using teaching instructions that are not yet known to it. In such cases, the robot needs to estimate simultaneously what the task is and the associated meaning of the instructions received from the user. For this work, we consider a scenario where a human teacher uses initially unknown spoken words, whose associated unknown meaning is either feedback (good/bad) or guidance (go left, right, …). We present computational results, within an inverse reinforcement learning framework, showing that a) it is possible to learn the meaning of unknown and noisy teaching instructions, as well as a new task at the same time, b) it is possible to reuse the acquired knowledge about instructions for learning new tasks, and c) even if the robot initially knows some of the instructions’ meanings, the use of extra unknown teaching instructions improves learning efficiency.
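The joint estimation can be illustrated with a toy example. The sketch below is not the paper's inverse reinforcement learning algorithm: it swaps in a much simpler consistency count, uses noise-free discrete words instead of spoken signals, and covers feedback only. The one-dimensional world, the words "foo" and "bar", and all function names are assumptions made for illustration.

```python
def expected_meaning(state, action, goal):
    """Feedback a teacher would give if `goal` were the task: 'good' when
    the action moves the robot closer to the goal, 'bad' otherwise."""
    return "good" if abs(state + action - goal) < abs(state - goal) else "bad"

def word_counts(goal, interactions):
    """For each word, count how often it co-occurs with each meaning,
    assuming the task is `goal`."""
    counts = {}
    for state, action, word in interactions:
        meaning = expected_meaning(state, action, goal)
        counts.setdefault(word, {"good": 0, "bad": 0})[meaning] += 1
    return counts

def consistency(goal, interactions):
    """Fraction of signals explained by the best word-to-meaning mapping
    under the hypothesis that the task is `goal`."""
    counts = word_counts(goal, interactions)
    explained = sum(max(c.values()) for c in counts.values())
    return explained / len(interactions)

def infer_task_and_meanings(goals, interactions):
    """Pick the task hypothesis under which the words are used most
    consistently, and read the word meanings off that hypothesis."""
    best_goal = max(goals, key=lambda g: consistency(g, interactions))
    counts = word_counts(best_goal, interactions)
    meanings = {word: max(c, key=c.get) for word, c in counts.items()}
    return best_goal, meanings

# Toy world: positions 0..4 on a line, actions -1 (left) and +1 (right).
# The teacher has goal 3 in mind and says "foo" for good, "bar" for bad;
# the robot knows neither the goal nor what the words mean.
true_goal = 3
interactions = []
for state in range(5):
    for action in (-1, +1):
        word = "foo" if expected_meaning(state, action, true_goal) == "good" else "bar"
        interactions.append((state, action, word))

goal, meanings = infer_task_and_meanings(range(5), interactions)
print(goal, meanings)
```

The design choice is that a wrong task hypothesis forces the same word to mean "good" in some situations and "bad" in others, so the hypothesis that yields the most coherent labeling identifies both the task and the vocabulary at once.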

Learn more from my webpage: https://flowers.inria.fr/jgrizou/